# TL;DR

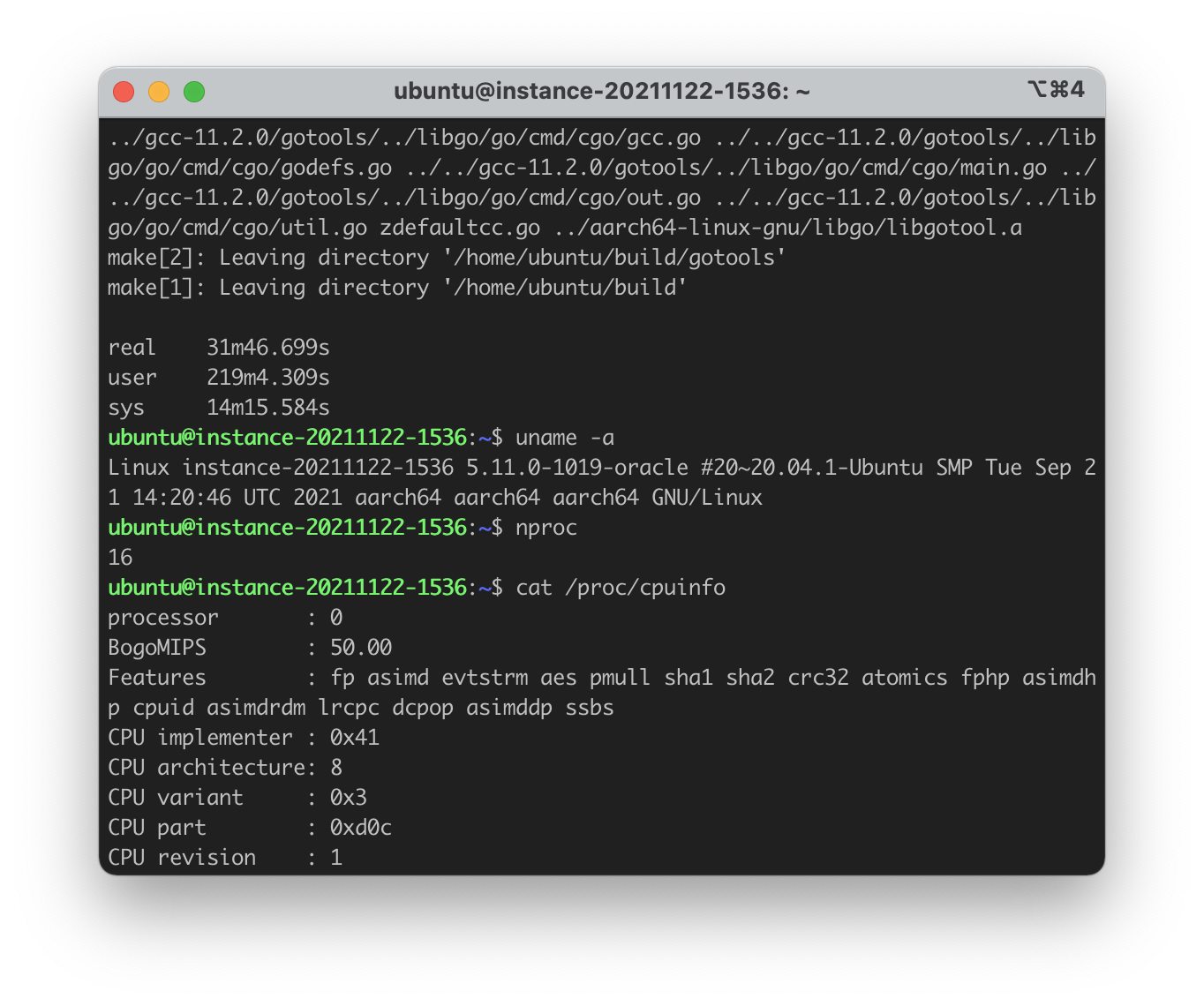

If an optional module requires you to call use Module in your code, you need to check the availability of that module outside defmodule, before both defmodule and use Module:

# The condition here does not have to be Code.ensure_loaded?(OptionalModule)

# It can be Application.compile_env(:my_app, :enable_optional_feature)

# or any other condition that can be evaluated at compile-time

if Code.ensure_loaded?(OptionalModule) do

defmodule MyLibrary do

use OptionalModule, option: value

def my_function do

OptionalModule.function()

end

end

else

defmodule MyLibrary do

def my_function do

raise RuntimeError, """

`OptionalModule` does not exist. To use this feature,

please add `:optional_module` to the dependency list.

"""

end

end

end

The following code won't work, although it looks simpler and seems correct.

defmodule MyLibrary do

if Code.ensure_loaded?(OptionalModule) do

use OptionalModule, option: value

def my_function do

OptionalModule.function()

end

else

def my_function do

raise RuntimeError, """

`OptionalModule` does not exist. To use this feature,

please add `:optional_module` to the dependency list.

"""

end

end

end

# Environment for This Post

At the time of writing, I'm using Elixir version v1.14.1. The OTP version and operating system are not relevant to the things discussed below.

This behaviour is unlikely to change in the future. However, if you are using a later version of Elixir, please verify that the code and the conclusions in this post still hold.

# A careless mistake?

A few hours ago I received an issue report related to evision v0.1.14 on elixirforum, and the issue can be reproduced in one line:

$ iex

iex> Mix.install({:evision, "== 0.1.14"})

...

==> evision

Compiling 179 files (.ex)

== Compilation error in file lib/smartcell/ml_traindata.ex ==

** (CompileError) lib/smartcell/ml_traindata.ex:3: module Kino.JS is not loaded and could not be found. This may be happening because the module you are trying to load directly or indirectly depends on the current module

(elixir 1.14.0) expanding macro: Kernel.use/2

lib/smartcell/ml_traindata.ex:3: Evision.SmartCell.ML.TrainData (module)

(elixir 1.14.0) expanding macro: Kernel.if/2

lib/smartcell/ml_traindata.ex:2: Evision.SmartCell.ML.TrainData (module)

could not compile dependency :evision, "mix compile" failed. Errors may have been logged above. You can recompile this dependency with "mix deps.compile evision", update it with "mix deps.update evision" or clean it with "mix deps.clean evision"

How did I fail to catch this, and how did this bug hide in seemingly correct code? Is this simply a careless mistake, or is there something deeper behind it? Let me unroll this story about if and use for you.

# Use if at Compile-time

Using if at compile-time is pretty common in Elixir. It is mainly used to define functions only under certain conditions, for example:

defmodule A do

if 1 < 2 do

def print, do: IO.puts("Yes, 1 < 2")

else

def print, do: IO.puts("Oh...")

end

end

A.print

If we run it, the expected behaviour is that the program prints Yes, 1 < 2 and exits.

$ mix run --no-mix-exs example.exs

Yes, 1 < 2

Also, we can verify that the Elixir compiler will remove the false branch of the if-statement (if the condition can be determined at compile-time):

defmodule A do

if 1 < 2 do

def should_exist, do: IO.puts("ok")

else

def should_not_exist, do: IO.puts("what")

end

end

A.should_exist

A.should_not_exist

$ mix run --no-mix-exs false-branch.exs

ok

** (UndefinedFunctionError) function A.should_not_exist/0 is undefined or private. Did you mean:

* should_exist/0

A.should_not_exist()

(elixir 1.14.0) lib/code.ex:1245: Code.require_file/2

(mix 1.14.0) lib/mix/tasks/run.ex:144: Mix.Tasks.Run.run/5

Lastly, we can verify that if we use the return value of Code.ensure_loaded?/1, it will do the same thing as the above examples -- we can expect that only the code under the branch that is taken will be compiled:

# mix new ensure_loaded_example

# cd ensure_loaded_example

defmodule A do

if Code.ensure_loaded?(B) do

def has_b?, do: true

def should_not_exists, do: IO.puts("what")

else

def has_b?, do: false

def should_exists, do: IO.puts("ok")

end

end

Let's try it in an IEx session:

$ iex -S mix

iex> A.has_b?

false

iex> defmodule B do

...> end

iex> A.has_b?

false

iex> A.should_exists

ok

:ok

iex> A.should_not_exists

** (UndefinedFunctionError) function A.should_not_exists/0 is undefined or private. Did you mean:

* should_exists/0

(task1b 0.1.0) A.should_not_exists()

iex:5: (file)

# Apply What We Have Learnt So Far

Now, a quick background on the module that caused the compilation error: we'd like to write a module that implements Kino.SmartCell, and we'd like this to be an optional feature.

Therefore, we should check if Kino.SmartCell is loaded. If it is, we follow kino's SmartCell tutorial, define our own functions and get the job done; otherwise, no functions will be defined in this module.

With the above idea in mind, we have the following code:

defmodule Evision.SmartCell.ML.TrainData do

if Code.ensure_loaded?(Kino.SmartCell) do

use Kino.JS, assets_path: "lib/assets"

# ...

end

end

At first glance, this code looks good to me because

- Kino.SmartCell is built on top of Kino.JS. Therefore, if Kino.SmartCell is loaded, then Kino.JS must be loaded too.
- we only call use after we have ensured that Kino.JS is loaded.

Of course, :kino is indeed listed in deps in evision's mix.exs file.

# What Went Wrong?

Well then, let's face the inevitable question -- what went wrong?

Apparently, the Elixir compiler tried to evaluate the use-statement on line 3, although we expected the compiler to completely avoid evaluating this false branch. However, the Elixir compiler has to check that all the code, including the code in the false branch, is syntactically correct. For example, the following code will not pass the syntax check:

defmodule Foo do

if false do

Bar.(

end

end

$ mix run --no-mix-exs foo.exs

** (SyntaxError) foo.exs:9:3: unexpected reserved word: end. The "(" at line 8 is missing terminator ")"

(elixir 1.14.0) lib/code.ex:1245: Code.require_file/2

(mix 1.14.0) lib/mix/tasks/run.ex:144: Mix.Tasks.Run.run/5

(mix 1.14.0) lib/mix/tasks/run.ex:84: Mix.Tasks.Run.run/1

(mix 1.14.0) lib/mix/task.ex:421: anonymous fn/3 in Mix.Task.run_task/4

(mix 1.14.0) lib/mix/cli.ex:84: Mix.CLI.run_task/2

During this process, macros will be expanded. You might already know that use is a macro. So yes, that's where the problem emerges.

Based on the information given in the Getting Started manual on elixir-lang.org, the following code

defmodule A do

use Kino.JS, assets: "lib/assets"

end

will be compiled into

defmodule A do

require Kino.JS

Kino.JS.__using__(assets: "lib/assets")

end

Since __using__ is a macro, we have to call require to bring in all the macros defined in that module.

You see, if the application :kino is optional and the user didn't list :kino in their deps, then require will surely fail because it cannot find the Kino.JS module let alone the macros defined in Kino.JS.
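To make the expansion concrete, here is a minimal sketch that expands use one step and then tries to require a module that does not exist. HypotheticalMissing is a name I made up for this demo; on Elixir 1.14 I would expect the error to be a CompileError, but the code only relies on some exception being raised.

```elixir
# HypotheticalMissing is an assumed name for a module that does not exist.

# Expand `use` by a single step; the result is still plain quoted code.
expanded = Macro.expand_once(quote(do: use(HypotheticalMissing, option: 1)), __ENV__)
use_source = Macro.to_string(expanded)
IO.puts(use_source)

# Requiring a module that cannot be found fails while the code is compiled.
require_result =
  try do
    Code.eval_string("require HypotheticalMissing")
    :ok
  rescue
    error -> {:error, error.__struct__}
  end

IO.inspect(require_result)
```

The printed expansion should contain both a require and a call to __using__, which is the pair that the rest of this post keeps running into.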

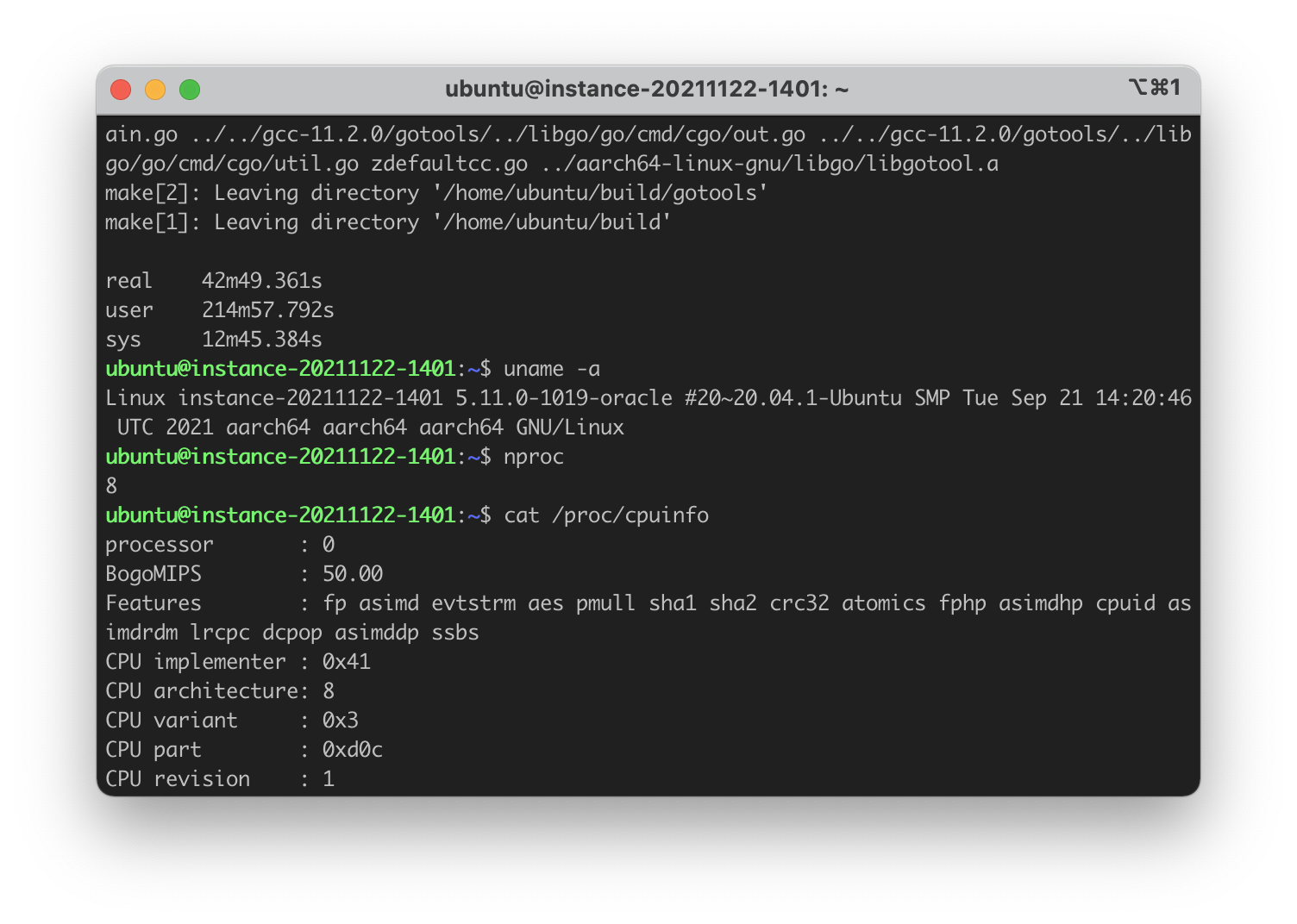

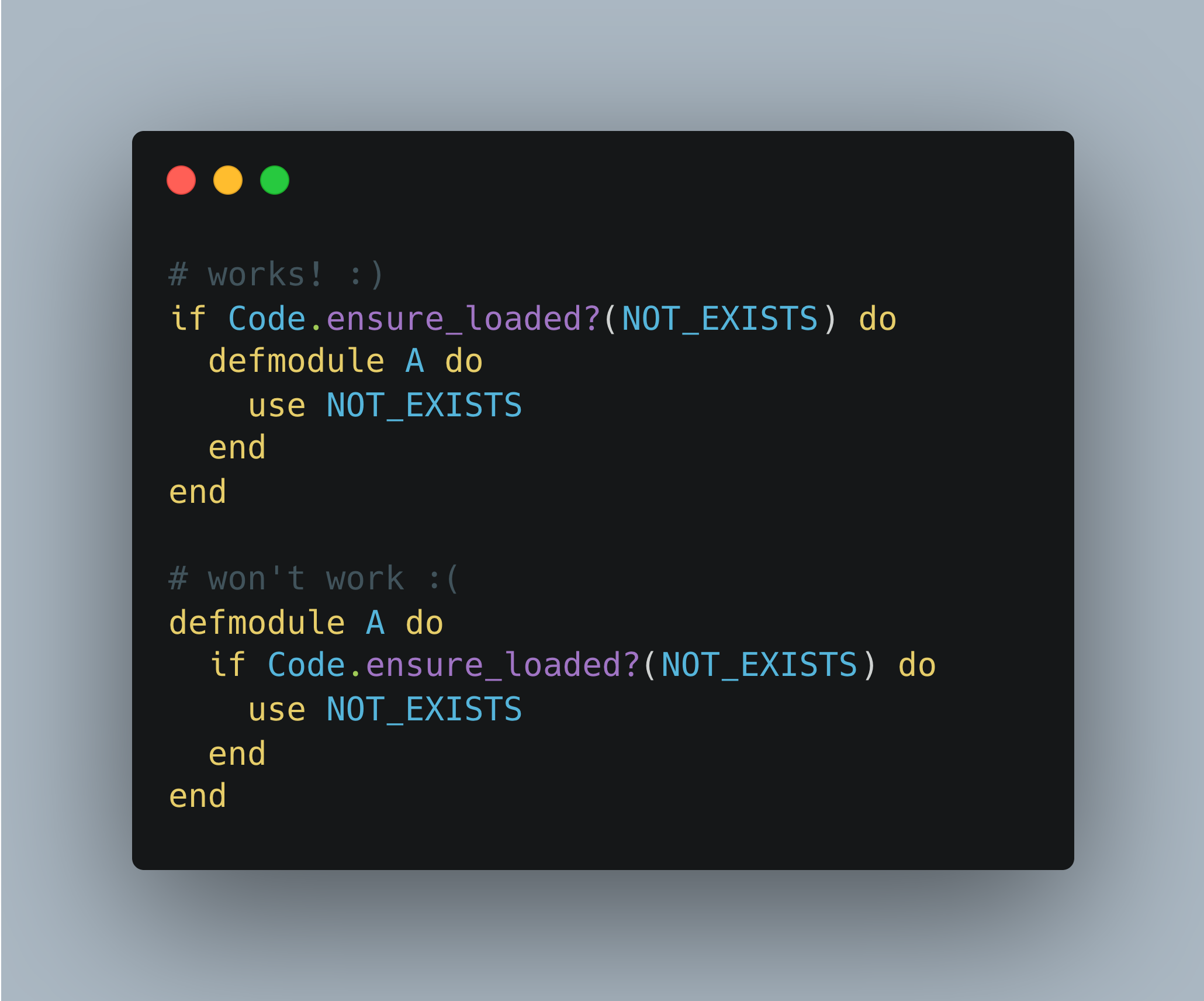

And require will fail even if the use-statement (or the require-statement, after the expansion) is technically inside the false branch of an if-statement, and even when the condition is absolutely false:

defmodule A do

if false do

use NOT_EXISTS

end

end

$ mix run --no-mix-exs absolutely-false.exs

** (CompileError) absolutely-false.exs:3: module NOT_EXISTS is not loaded and could not be found

(elixir 1.14.0) expanding macro: Kernel.use/1

example.exs:3: A (module)

(elixir 1.14.0) expanding macro: Kernel.if/2

example.exs:2: A (module)

# Any Solution to This?

The simplest way is, of course, listing :kino as a required dependency, but I found another way to solve this issue while keeping :kino as an optional dependency.

We just saw that the following code would cause a compilation error,

defmodule A do

if false do

use NOT_EXISTS

end

end

but if we slightly re-arrange these three macros (yes, defmodule, if and use are all macros), we can achieve what we want:

if false do

defmodule A do

use NOT_EXISTS

end

end

As for evision, we can change the code from

defmodule Evision.SmartCell.ML.TrainData do

if Code.ensure_loaded?(Kino.SmartCell) do

use Kino.JS, assets_path: "lib/assets"

# ...

end

end

to the following

if Code.ensure_loaded?(Kino.SmartCell) do

defmodule Evision.SmartCell.ML.TrainData do

use Kino.JS, assets_path: "lib/assets"

# ...

end

end

And... problem solved! However, this post will not end here without understanding the reasons: why did this happen in the first place, and why does it work after re-arranging if, defmodule and use?
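If you want to check both arrangements side by side without creating files, a small sketch like the one below should do. Demo.Probe, Demo.InsideIf, Demo.OutsideIf and HypotheticalMissing are all hypothetical names introduced for this demo; the snippets are evaluated with Code.eval_string/1 and any exception is treated as a failed compilation.

```elixir
defmodule Demo.Probe do
  # Evaluates a source snippet and reports whether it compiled without raising.
  def compiles?(source) do
    Code.eval_string(source)
    true
  rescue
    _error -> false
  end
end

# `if` inside `defmodule`: the body of the module is expanded, including `use`.
inside = """
defmodule Demo.InsideIf do
  if false do
    use HypotheticalMissing
  end
end
"""

# `if` outside `defmodule`: the module is never defined, so its body is never expanded.
outside = """
if false do
  defmodule Demo.OutsideIf do
    use HypotheticalMissing
  end
end
"""

IO.inspect(Demo.Probe.compiles?(inside), label: "if inside defmodule")
IO.inspect(Demo.Probe.compiles?(outside), label: "if outside defmodule")
```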

# The Reasons and Explanations

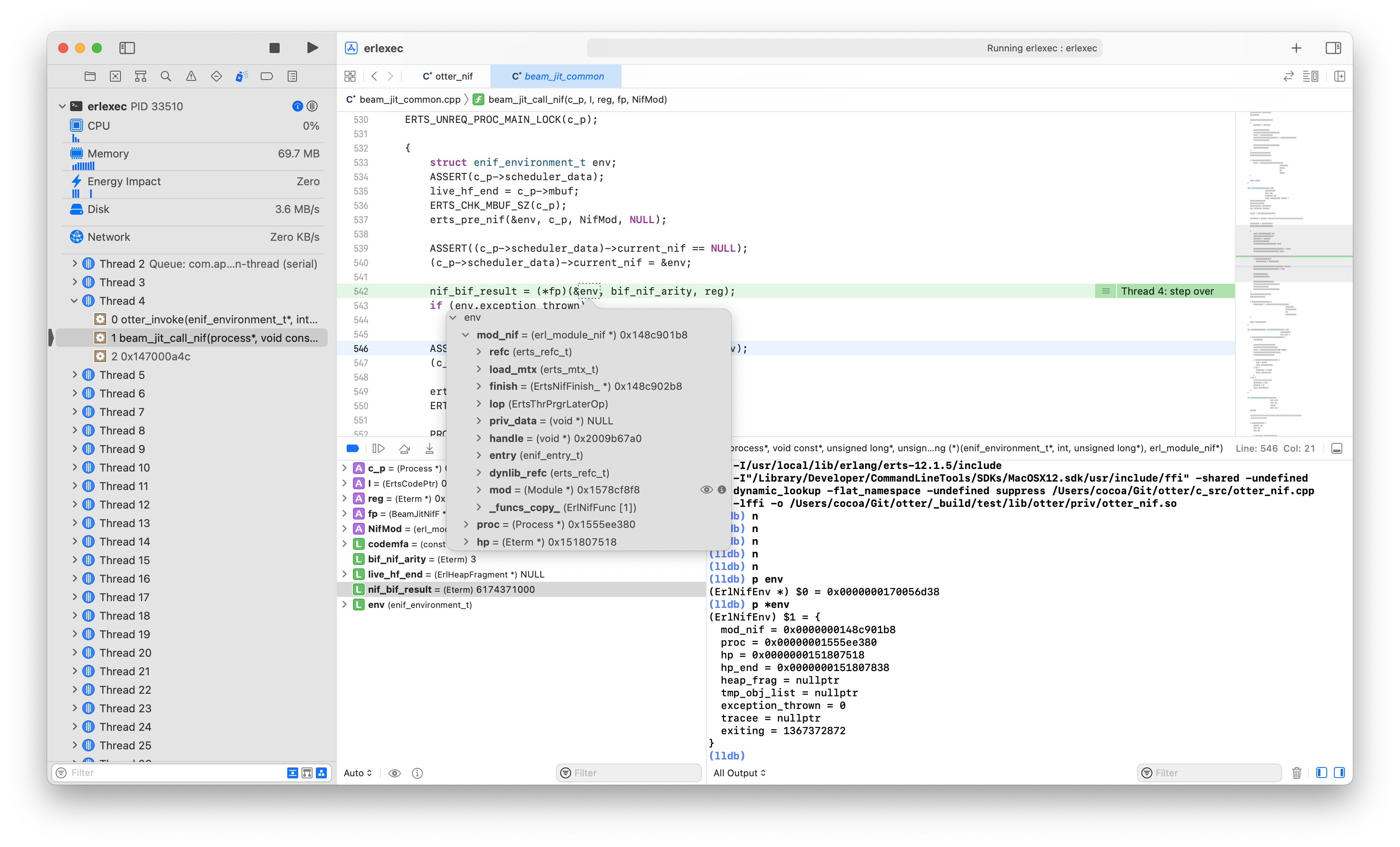

After digging into Elixir's source code for a few hours, I found some clues that might be related to this. Since we placed the if-statement first and it solved the problem, I was guessing that it might have something to do with the Elixir compiler and how the compiler behaves when traversing the AST (abstract syntax tree).

- The first clue is about macro expansion, like when macros are expanded by the compiler.

- The second one is the optimize_boolean/1 function in build_if/2 in kernel.ex. Maybe the if-statement here didn't get optimised, which could cause the compilation error.
- The last clue I suspected is allows_fast_compilation/1 for defmodule, in elixir_compiler.erl. Because the name of this function is kinda sus, and based on the name, it seems to allow the compiler to skip/defer the evaluation of defmodule.

allows_fast_compilation({defmodule, _, [_, [{do, _}]]}) ->

true;

Then I decided to ask @josevalim about this, and he replied kindly, and his answers solved this puzzle. I'll now connect all the dots along with his answers and explain this mystery below.

A big thanks to José!

In Elixir's source code (v1.14.1), defmodule is defined in kernel.ex,

defmacro defmodule(alias, do_block)

use is in kernel.ex too,

defmacro use(module, opts \\ [])

and, of course, as spoiled above, if is also a macro

defmacro if(condition, clauses)

And macros are always expanded before the branches (e.g., an if-statement has two branches, true and false) are evaluated. That is to say, when the compiler sees the following code

defmodule A do

if false do

use NOT_EXISTS

end

end

it will expand the macros first:

defmodule A do

if false do

require NOT_EXISTS

NOT_EXISTS.__using__([])

end

end

But it will fail when evaluating require NOT_EXISTS because the module does not exist. We can see this in the stack trace:

When the compiler sees an if-statement, it will go inside and expand macros (if any). That's why the Kernel.use/1 is on top of Kernel.if/2 in the traced stack:

$ mix run --no-mix-exs absolutely-false.exs

** (CompileError) absolutely-false.exs:3: module NOT_EXISTS is not loaded and could not be found

(elixir 1.14.0) expanding macro: Kernel.use/1

example.exs:3: A (module)

(elixir 1.14.0) expanding macro: Kernel.if/2

example.exs:2: A (module)

And then I asked why putting if before defmodule does not cause the same compilation error. And José answered:

Because there are a few things that delay macro expansion, such as defining new modules or defining functions. Because you can do this:

module = Foo

defmodule module do

...

end

Therefore, to define the module, you must execute the line that defines it. So only after you execute the line, the macros are expanded. defmodule A do ... end compiles to something like this:

:elixir_module.compile(A, ast_of_the_module_body)

that AST will only be expanded if the module is in fact defined.
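Both halves of José's answer can be poked at from a script. The sketch below first defines modules whose names are only known at runtime, then expands defmodule by one step and checks that the result refers to :elixir_module without ever touching the use in the body. Demo.Dynamic.*, Demo.Deferred and HypotheticalMissing are hypothetical names for this demo, and the exact shape of the expanded code is an implementation detail that may differ between Elixir versions.

```elixir
# 1. The module name can be computed at runtime, so defmodule must be executed
#    before the compiler even knows which module is being defined.
modules =
  for suffix <- [:Alpha, :Beta] do
    module = Module.concat(Demo.Dynamic, suffix)

    defmodule module do
      def hello, do: :world
    end

    module
  end

IO.inspect(modules)

# 2. Expanding defmodule by one step does not expand the module body:
#    `use HypotheticalMissing` stays untouched and nothing is raised here.
ast =
  quote do
    defmodule Demo.Deferred do
      use HypotheticalMissing
    end
  end

expanded = Macro.expand_once(ast, __ENV__)

# Walk the expanded code and look for a reference to :elixir_module.
{_ast, calls_elixir_module?} =
  Macro.prewalk(expanded, false, fn node, found ->
    {node, found or node == :elixir_module}
  end)

IO.inspect(calls_elixir_module?, label: "expansion refers to :elixir_module")
```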

Therefore, the following code

defmodule A do

if false do

use NOT_EXISTS

end

end

is first compiled to the following quoted code in kernel.ex

quote do

unquote(with_alias)

:elixir_module.compile(unquote(expanded), unquote(escaped), unquote(module_vars), __ENV__)

end

And this emitted code will be executed because the defmodule in this example is unconditionally written in the source code instead of existing in the false branch of an if-statement. When :elixir_module.compile/4 is invoked, the if-statement will be evaluated, and the use macro will be expanded during the evaluation process of if.

Again, we can verify this by looking at the stacktrace:

$ mix run --no-mix-exs absolutely-false.exs

** (CompileError) absolutely-false.exs:3: module NOT_EXISTS is not loaded and could not be found

(elixir 1.14.0) expanding macro: Kernel.use/1

example.exs:3: A (module)

(elixir 1.14.0) expanding macro: Kernel.if/2

example.exs:2: A (module)

Searching for is not loaded and could not be found in Elixir's source code will bring us to format_error/1 in elixir_aliases.erl.

format_error({unloaded_module, Module}) ->

io_lib:format("module ~ts is not loaded and could not be found", [inspect(Module)]);

Since unloaded_module is an atom, we can search for it and trace back where it was produced. And it only appears in the same file in ensure_loaded/3.

ensure_loaded(Meta, Module, E) ->

case code:ensure_loaded(Module) of

{module, Module} ->

ok;

_ ->

case wait_for_module(Module) of

found ->

ok;

Wait ->

Kind = case lists:member(Module, ?key(E, context_modules)) of

true ->

case ?key(E, module) of

Module -> circular_module;

_ -> scheduled_module

end;

false when Wait == deadlock ->

deadlock_module;

false ->

unloaded_module

end,

elixir_errors:form_error(Meta, E, ?MODULE, {Kind, Module})

end

end.

This function will first check if the module is already loaded. If not, it will invoke wait_for_module/1 and wait for the module to be compiled if that module exists and can be compiled.
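The public Code.ensure_loaded/1 is not the same function as the internal elixir_aliases:ensure_loaded/3, but it performs a similar "is it loaded?" check, so it is a convenient way to see what that first step reports. A minimal sketch, with HypotheticalMissing as a made-up module name:

```elixir
# Kernel is always available; HypotheticalMissing is an assumed, non-existent module.
loaded = Code.ensure_loaded(Kernel)
missing = Code.ensure_loaded(HypotheticalMissing)

IO.inspect(loaded, label: "Kernel")
IO.inspect(missing, label: "HypotheticalMissing")

# The boolean variant is the one used as the guard condition throughout this post.
guard = Code.ensure_loaded?(HypotheticalMissing)
IO.inspect(guard, label: "Code.ensure_loaded?/1")
```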

wait_for_module(Module) ->

case erlang:get(elixir_compiler_info) of

undefined -> not_found;

_ -> 'Elixir.Kernel.ErrorHandler':ensure_compiled(Module, module, hard)

end.

Yet obviously module NOT_EXISTS does not exist, so 'Elixir.Kernel.ErrorHandler':ensure_compiled/3 will not return found. ensure_loaded/3 then falls through to its last clause and picks unloaded_module as Kind, which is subsequently passed to elixir_errors:form_error/4.

form_error(Meta, #{file := File}, Module, Desc) ->

compile_error(Meta, File, Module:format_error(Desc));

form_error(Meta, File, Module, Desc) ->

compile_error(Meta, File, Module:format_error(Desc)).

and becomes the compilation error message.

** (CompileError) absolutely-false.exs:3: module NOT_EXISTS is not loaded and could not be found

And to dig deeper, we can search for :ensure_loaded in the code base; the call in elixir_expand.erl, invoked in function expand/3, is the one we are interested in.

expand({require, Meta, [Ref, Opts]}, S, E) ->

assert_no_match_or_guard_scope(Meta, "require", S, E),

{ERef, SR, ER} = expand_without_aliases_report(Ref, S, E),

{EOpts, ST, ET} = expand_opts(Meta, require, [as, warn], no_alias_opts(Opts), SR, ER),

if

is_atom(ERef) ->

elixir_aliases:ensure_loaded(Meta, ERef, ET),

{ERef, ST, expand_require(Meta, ERef, EOpts, ET)};

true ->

form_error(Meta, E, ?MODULE, {expected_compile_time_module, require, Ref})

end;

And this is exactly where the compilation error happens.

defmodule A do

if false do

require NOT_EXISTS # <= elixir_aliases:ensure_loaded(Meta, ERef, ET),

NOT_EXISTS.__using__([])

end

end

And we can continue to search for elixir_expand:expand in the source code, and we will find the function expand_quoted/7 in elixir_dispatch.erl.

expand_quoted(Meta, Receiver, Name, Arity, Quoted, S, E) ->

Next = elixir_module:next_counter(?key(E, module)),

try

ToExpand = elixir_quote:linify_with_context_counter(Meta, {Receiver, Next}, Quoted),

elixir_expand:expand(ToExpand, S, E)

catch

Kind:Reason:Stacktrace ->

MFA = {Receiver, elixir_utils:macro_name(Name), Arity+1},

Info = [{Receiver, Name, Arity, [{file, "expanding macro"}]}, caller(?line(Meta), E)],

erlang:raise(Kind, Reason, prune_stacktrace(Stacktrace, MFA, Info, error))

end.

Note that the Info on line 248 will become

...

(elixir 1.14.0) expanding macro: Kernel.use/1

...

(elixir 1.14.0) expanding macro: Kernel.if/2

...

in the stacktrace. One more step and we will find that expand_quoted/7 is called in two dispatch functions: dispatch_import/6 and dispatch_require/7 in the same file.

dispatch_require(Meta, Receiver, Name, Args, S, E, Callback) when is_atom(Receiver) ->

Arity = length(Args),

case elixir_rewrite:inline(Receiver, Name, Arity) of

{AR, AN} ->

Callback(AR, AN, Args);

false ->

case expand_require(Meta, Receiver, {Name, Arity}, Args, S, E) of

{ok, Receiver, Quoted} -> expand_quoted(Meta, Receiver, Name, Arity, Quoted, S, E);

error -> Callback(Receiver, Name, Args)

end

end;

dispatch_require(_Meta, Receiver, Name, Args, _S, _E, Callback) ->

Callback(Receiver, Name, Args).

As for elixir_dispatch:dispatch_require/7, it is invoked in two places: the first one is when processing remote calls in elixir_expand:expand/3. A remote call invokes a function in a module other than the current one. For example,

defmodule A do

def foo, do: :ok

def bar do

# this is a local call

foo()

end

end

defmodule B do

def baz do

# this is a remote call

A.foo()

end

end

The second call to elixir_dispatch:dispatch_require/7 can be found in elixir_module:expand_callback/6.

expand_callback(Line, M, F, Args, Acc, Fun) ->

E = elixir_env:reset_vars(Acc),

S = elixir_env:env_to_ex(E),

Meta = [{line, Line}, {required, true}],

{EE, _S, ET} =

elixir_dispatch:dispatch_require(Meta, M, F, Args, S, E, fun(AM, AF, AA) ->

Fun(AM, AF, AA),

{ok, S, E}

end),

if

is_atom(EE) ->

ET;

true ->

try

{_Value, _Binding, EF} = elixir:eval_forms(EE, [], ET),

EF

catch

Kind:Reason:Stacktrace ->

Info = {M, F, length(Args), location(Line, E)},

erlang:raise(Kind, Reason, prune_stacktrace(Info, Stacktrace))

end

end.

elixir_module:expand_callback/6 is used in two places: the first one is in Protocol.derive/5, and as the module name suggests, it is related to deriving a protocol for a module.

We can go off on a tangent here and explore what will happen if we want to derive a protocol optionally. You can skip this and jump to the second call to elixir_module:expand_callback/6.

Let's say we have already defined a protocol Derivable as the following:

defprotocol Derivable do

def ok(arg)

end

defimpl Derivable, for: Any do

defmacro __deriving__(module, struct, options) do

quote do

defimpl Derivable, for: unquote(module) do

def ok(arg) do

{:ok, arg, unquote(Macro.escape(struct)), unquote(options)}

end

end

end

end

def ok(arg) do

{:ok, arg}

end

end

Then there are three ways to derive a protocol for a module, and we can start with the simplest one:

- Deriving a protocol using the @derive tag.

defmodule A do

@derive {Derivable, option: :some_value}

defstruct a: 0, b: 0

end

- The second way is using the defimpl/3 macro in kernel.ex.

defmodule A do

defimpl Derivable do

def ok(_arg), do: :my_args

end

end

- The last way is to do this explicitly through the API, via Protocol.derive/3.

defmodule A do

defstruct a: 0, b: 0

require Protocol

Protocol.derive(Derivable, A, option: :some_value)

end

What will happen if we'd like to derive a protocol from an optional module?

defmodule A do

if Code.ensure_loaded?(NOT_EXISTS) do

@derive {NOT_EXISTS, option: :some_value}

end

defstruct a: 0, b: 0

end

That's the first one, and the second one is shown below

defmodule A do

if Code.ensure_loaded?(NOT_EXISTS) do

defimpl NOT_EXISTS, for: A do

end

end

defstruct a: 0, b: 0

end

And the last one

defmodule A do

defstruct a: 0, b: 0

if Code.ensure_loaded?(NOT_EXISTS) do

require Protocol

Protocol.derive(NOT_EXISTS, A, option: :some_value)

end

end

All three examples that optionally derive a protocol from an optional module will compile without any issues and behave as expected. Of course, we'd like to ask the question -- why can we use if inside the defmodule to optionally derive a protocol?

Let me explain the reasons for the first way first. The @derive tag is a module attribute, and @derive tags in the same module will be collected accumulatively in a bag -- simply put, they will be stored in a list. Then the defstruct/1 macro will retrieve them from Kernel.Utils.defstruct/3 and rewrite them with Protocol.__derive__/3. (This also explains why all @derive tags must be set before defstruct/1)

defmacro defstruct(fields) do

quote bind_quoted: [fields: fields, bootstrapped?: bootstrapped?(Enum)] do

{struct, derive, kv, body} = Kernel.Utils.defstruct(__MODULE__, fields, bootstrapped?)

case derive do

[] -> :ok

_ -> Protocol.__derive__(derive, __MODULE__, __ENV__)

end

def __struct__(), do: @__struct__

def __struct__(unquote(kv)), do: unquote(body)

Kernel.Utils.announce_struct(__MODULE__)

struct

end

end

Since Protocol.__derive__/3 is a function instead of a macro, it will not be evaluated/expanded when processing the branches of an if-statement. Therefore, the following code will compile and run as expected.

defmodule A do

if Code.ensure_loaded?(NOT_EXISTS) do

@derive {NOT_EXISTS, option: :some_value}

end

defstruct a: 0, b: 0

end

As for the second way that uses the defimpl/3 macro in kernel.ex, although defimpl/3 is a macro, it simply rewrites the code to call the Protocol.__impl__/4 function.

defmacro defimpl(name, opts, do_block \\ []) do

Protocol.__impl__(name, opts, do_block, __CALLER__)

end

So, it's similar to the first one: the call to the function Protocol.__impl__/4 will not be evaluated when processing the branches of an if-statement.

The same reason goes for the last one: Protocol.derive/3 is a function, so it will not be evaluated when processing the branches of an if-statement.
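Here is a runnable version of the @derive case, as a minimal sketch. Demo.Point and HypotheticalProtocol are names I invented for the demo; since HypotheticalProtocol does not exist, the guard is false and the struct is defined without any derived protocol.

```elixir
defmodule Demo.Point do
  # HypotheticalProtocol is an assumed name; it is not defined anywhere,
  # so the @derive attribute below is never set.
  if Code.ensure_loaded?(HypotheticalProtocol) do
    @derive {HypotheticalProtocol, option: :some_value}
  end

  defstruct x: 0, y: 0
end

# struct/2 is used instead of the %Demo.Point{} literal because the struct
# is defined in the same script that uses it.
point = struct(Demo.Point, x: 1)
fields = Map.from_struct(point)
IO.inspect(fields)
```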

Let's get back on track. The second call to elixir_module:expand_callback/6 can be found in eval_callbacks/5 in elixir_module.erl.

eval_callbacks(Line, DataBag, Name, Args, E) ->

Callbacks = bag_lookup_element(DataBag, {accumulate, Name}, 2),

lists:foldl(fun({M, F}, Acc) ->

expand_callback(Line, M, F, Args, Acc, fun(AM, AF, AA) -> apply(AM, AF, AA) end)

end, E, Callbacks).

eval_callbacks/5 is called in the same file in two different functions (actually one function, depending on how you see this). It first appears inside the compile/5 function (line 161). The second time it appears is in the eval_form/6 function (line 378).

However, eval_form/6 is only called once from the compile/5 function. The obvious difference is that the third argument passed by eval_form/6 is before_compile whereas compile/5 passes after_compile. So I guess you could say that eval_callbacks/5 is called in one function, compile/5.

compile(Line, Module, Block, Vars, E) ->

File = ?key(E, file),

check_module_availability(Line, File, Module),

ModuleAsCharlist = validate_module_name(Line, File, Module),

CompilerModules = compiler_modules(),

{Tables, Ref} = build(Line, File, Module),

{DataSet, DataBag} = Tables,

try

put_compiler_modules([Module | CompilerModules]),

{Result, NE} = eval_form(Line, Module, DataBag, Block, Vars, E),

CheckerInfo = checker_info(),

...

Based on the value of the third argument, and given that what we have is a compilation error, we can confirm that the compilation error is thrown when calling eval_form/6 with the third argument as before_compile.

And we are very close to connecting all the dots: eval_form/6 is called from elixir_module:compile/5, and elixir_module:compile/5 is called in elixir_module:compile/4, which is exactly the code that defmodule rewrites to!

So, if eval_callbacks/5 in eval_form/6 is successfully executed, and there are no other errors, we can expect the optimize_boolean/1 function in build_if/2

# Connecting the Dots

Let's first reproduce the whole process with the code that will cause a compilation error.

defmodule A do

if false do

use NOT_EXISTS

end

end

And we start from the shell command mix run --no-mix-exs absolutely-false.exs. The entry point for mix run [argv...] is Mix.Tasks.Run.run/1, and it will parse command line arguments and call run/5.

def run(args) do

{opts, head} =

OptionParser.parse_head!(

args,

aliases: [r: :require, p: :parallel, e: :eval, c: :config],

strict: [

parallel: :boolean,

require: :keep,

eval: :keep,

config: :keep,

mix_exs: :boolean,

halt: :boolean,

compile: :boolean,

deps_check: :boolean,

start: :boolean,

archives_check: :boolean,

elixir_version_check: :boolean,

parallel_require: :keep,

preload_modules: :boolean

]

)

run(args, opts, head, &Code.eval_string/1, &Code.require_file/1)

unless Keyword.get(opts, :halt, true), do: System.no_halt(true)

Mix.Task.reenable("run")

:ok

end

In this example, {opts, head} will be

{[mix_exs: false], ["absolutely-false.exs"]}

In run/5, we can ignore other checks and focus on the call to the callback function file_evaluator.(file). And since file_evaluator is just &Code.require_file/1, we can jump to the function require_file/1 in the code.ex file.

def require_file(file, relative_to \\ nil) when is_binary(file) do

{charlist, file} = find_file!(file, relative_to)

case :elixir_code_server.call({:acquire, file}) do

:required ->

nil

:proceed ->

loaded =

Module.ParallelChecker.verify(fn ->

:elixir_compiler.string(charlist, file, fn _, _ -> :ok end)

end)

:elixir_code_server.cast({:required, file})

loaded

end

end

find_file!/2 expands the relative path to the absolute path of the file and reads the whole file into a char list. Then :elixir_code_server.call/1 will check if the file is already required (compiled). If yes, we will do nothing; if it is not compiled yet, :elixir_compiler.string/3 will be called to compile the code.
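The "already required" check is easy to observe from the outside. In this minimal sketch I write a throwaway file into the temp directory and require it twice; the file name and the module Demo.RequiredOnce are hypothetical and exist only for this demo.

```elixir
# Create a tiny source file that defines one (hypothetical) module.
path =
  Path.join(
    System.tmp_dir!(),
    "require_once_demo_#{System.unique_integer([:positive])}.exs"
  )

File.write!(path, "defmodule Demo.RequiredOnce do\nend\n")

# The first call compiles the file; the second call finds it already required.
first = Code.require_file(path)
second = Code.require_file(path)
File.rm!(path)

IO.inspect(Enum.map(first, fn {module, _binary} -> module end), label: "first call")
IO.inspect(second, label: "second call")
```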

string(Contents, File, Callback) ->

Forms = elixir:'string_to_quoted!'(Contents, 1, 1, File, elixir_config:get(parser_options)),

quoted(Forms, File, Callback).

elixir:'string_to_quoted!'/5 will pass the file content to the tokenizer and convert tokens to their quoted form.

'string_to_quoted!'(String, StartLine, StartColumn, File, Opts) ->

case string_to_tokens(String, StartLine, StartColumn, File, Opts) of

{ok, Tokens} ->

case tokens_to_quoted(Tokens, File, Opts) of

{ok, Forms} ->

Forms;

{error, {Meta, Error, Token}} ->

elixir_errors:parse_error(Meta, File, Error, Token, {String, StartLine, StartColumn})

end;

{error, {Meta, Error, Token}} ->

elixir_errors:parse_error(Meta, File, Error, Token, {String, StartLine, StartColumn})

end.

The following AST will be emitted for this code:

{:defmodule, [line: 1],

[

{:__aliases__, [line: 1], [:A]},

[

do: {:if, [line: 2],

[

false,

[do: {:use, [line: 3], [{:__aliases__, [line: 3], [:NOT_EXISTS]}]}]

]}

]

]}

Next, elixir_compiler:quoted/3 will process the quoted form -- evaluate or compile the code with the local lexical environment in eval_or_compile/3.

quoted(Forms, File, Callback) ->

Previous = get(elixir_module_binaries),

try

put(elixir_module_binaries, []),

Env = (elixir_env:new())#{line := 1, file := File, tracers := elixir_config:get(tracers)},

elixir_lexical:run(

Env,

fun (LexicalEnv) -> eval_or_compile(Forms, [], LexicalEnv) end,

fun (#{lexical_tracker := Pid}) -> Callback(File, Pid) end

),

lists:reverse(get(elixir_module_binaries))

after

put(elixir_module_binaries, Previous)

end.

The implementation of eval_or_compile/3 is:

eval_or_compile(Forms, Args, E) ->

case (?key(E, module) == nil) andalso allows_fast_compilation(Forms) andalso

(not elixir_config:is_bootstrap()) of

true -> fast_compile(Forms, E);

false -> compile(Forms, Args, E)

end.

allows_fast_compilation/1 will check if this file always defines a module. If yes, we can skip some steps in compile/3 and always define the module.

allows_fast_compilation({'__block__', _, Exprs}) ->

lists:all(fun allows_fast_compilation/1, Exprs);

allows_fast_compilation({defmodule, _, [_, [{do, _}]]}) ->

true;

allows_fast_compilation(_) ->

false.

And the AST shown above indeed matches the one in the middle, therefore, we can do fast_compile/2.

fast_compile({defmodule, Meta, [Mod, [{do, TailBlock}]]}, NoLineE) ->

E = NoLineE#{line := ?line(Meta)},

Block = {'__block__', Meta, [

{'=', Meta, [{result, Meta, ?MODULE}, TailBlock]},

{{'.', Meta, [elixir_utils, noop]}, Meta, []},

{result, Meta, ?MODULE}

]},

Expanded = case Mod of

{'__aliases__', _, _} ->

case elixir_aliases:expand_or_concat(Mod, E) of

Receiver when is_atom(Receiver) -> Receiver;

_ -> 'Elixir.Macro':expand(Mod, E)

end;

_ ->

'Elixir.Macro':expand(Mod, E)

end,

ContextModules = [Expanded | ?key(E, context_modules)],

elixir_module:compile(Expanded, Block, [], E#{context_modules := ContextModules}).

After the pattern matching, we get

Meta = [line: 1]

Mod = {:__aliases__, [line: 1], [:A]}

TailBlock =

{:if, [line: 2],

[

false,

[do: {:use, [line: 3], [{:__aliases__, [line: 3], [:NOT_EXISTS]}]}]

]}

Now we can jump right into elixir_module:compile/4, which will call elixir_module:compile/5. We can expect the compilation error right after calling elixir_module:eval_form/6 in elixir_module:compile/5. And you know the rest of the story.

What about the code that can achieve our goal?

if false do

defmodule A do

use NOT_EXISTS

end

end

The AST of the above code is

{:if, [line: 1],

[

false,

[

do: {:defmodule, [line: 2],

[

{:__aliases__, [line: 2], [:A]},

[do: {:use, [line: 3], [{:__aliases__, [line: 3], [:NOT_EXISTS]}]}]

]}

]

]}

And now the difference is that we cannot do fast_compile/2 because the file may not define a module. Therefore, now we will take the compile/3 route in eval_or_compile/3.
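To see which route each file shape would take, the Erlang predicate can be mirrored in a few lines of Elixir. This is only my own rendering of the three clauses quoted earlier, not code from the compiler; Demo.FastPath and only_modules?/1 are hypothetical names.

```elixir
defmodule Demo.FastPath do
  # A block qualifies only if every expression in it qualifies.
  def only_modules?({:__block__, _meta, exprs}), do: Enum.all?(exprs, &only_modules?/1)
  # A plain `defmodule Name do ... end` qualifies.
  def only_modules?({:defmodule, _meta, [_name, [do: _body]]}), do: true
  # Anything else (for example a top-level `if`) does not.
  def only_modules?(_other), do: false
end

# Parsing only builds the AST; nothing is expanded or compiled here.
module_first =
  Code.string_to_quoted!("""
  defmodule A do
    if false do
      use NOT_EXISTS
    end
  end
  """)

if_first =
  Code.string_to_quoted!("""
  if false do
    defmodule A do
      use NOT_EXISTS
    end
  end
  """)

IO.inspect(Demo.FastPath.only_modules?(module_first), label: "defmodule at the top")
IO.inspect(Demo.FastPath.only_modules?(if_first), label: "if at the top")
```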

compile(Quoted, ArgsList, E) ->

{Expanded, SE, EE} = elixir_expand:expand(Quoted, elixir_env:env_to_ex(E), E),

elixir_env:check_unused_vars(SE, EE),

{Module, Fun, Purgeable} =

elixir_erl_compiler:spawn(fun() -> spawned_compile(Expanded, E) end),

Args = list_to_tuple(ArgsList),

{dispatch(Module, Fun, Args, Purgeable), EE}.

And the variable Expanded will be something like this

{:case, [line: 1, optimize_boolean: true],

[

false,

[

do: [

{:->, [generated: true, line: 1], [[false], nil]},

{:->, [generated: true, line: 1],

[

[true],

{:__block__, [line: 2],

[

A,

{{:., [line: 2], [:elixir_module, :compile]}, [line: 2],

[

...

]}

]}

]}

]

]

]}

As we can see from this intermediate output, optimize_boolean/1 has already been called and gives us the tuple {:case, [line: 1, optimize_boolean: true], [false, [do: ...]]}.
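A single expansion step is enough to watch if turn into case. The sketch below only relies on the outer form being :case; whether the metadata carries optimize_boolean: true at this stage is something I would expect on Elixir 1.14 based on the output above, but treat it as an assumption and check the printed value on your version.

```elixir
# Expand `if` by one step and take the resulting three-element node apart.
expanded = Macro.expand_once(quote(do: if(false, do: :taken)), __ENV__)
{form, meta, _args} = expanded

IO.inspect(form, label: "outer form")
IO.inspect(Keyword.get(meta, :optimize_boolean), label: "optimize_boolean metadata")
```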

In spawned_compile/2, we will translate quoted Elixir expressions and variables into forms that Erlang can compile into a .beam binary.

spawned_compile(ExExprs, #{line := Line, file := File} = E) ->

{Vars, S} = elixir_erl_var:from_env(E),

{ErlExprs, _} = elixir_erl_pass:translate(ExExprs, erl_anno:new(Line), S),

Module = retrieve_compiler_module(),

Fun = code_fun(?key(E, module)),

Forms = code_mod(Fun, ErlExprs, Line, File, Module, Vars),

{Module, Binary} = elixir_erl_compiler:noenv_forms(Forms, File, [nowarn_nomatch, no_bool_opt, no_ssa_opt]),

code:load_binary(Module, "", Binary),

{Module, Fun, is_purgeable(Module, Binary)}.

In function is_purgeable/2 we test if the beam binary has any labeled locals,

is_purgeable(Module, Binary) ->

beam_lib:chunks(Binary, [labeled_locals]) == {ok, {Module, [{labeled_locals, []}]}}.

If not, we can evaluate the code and return the evaluated result as the compiled output (in function dispatch/4, and dispatch/4 was called in the compile/3 route).

dispatch(Module, Fun, Args, Purgeable) ->

Res = Module:Fun(Args),

code:delete(Module),

Purgeable andalso code:purge(Module),

return_compiler_module(Module, Purgeable),

Res.

In this example, Res will be nil because the condition used in the if-statement is false, so the do block that contains defmodule is never executed; meanwhile, as there is no else branch, the if-expression evaluates to the default value, nil.

Therefore, if we were using Code.ensure_loaded?/1 as the condition, it would also be evaluated to either true or false at compile-time. If it is false, then the defmodule inside the if-statement is never executed, so we won't encounter any compilation errors for the following code.

if Code.ensure_loaded?(NOT_EXISTS) do

defmodule A do

use NOT_EXISTS

end

end

will be evaluated to

if false do

defmodule A do

use NOT_EXISTS

end

else

nil

end

# actually, it will be evaluated to something like this

case false do

false ->

nil

true ->

defmodule A do

use NOT_EXISTS

end

end

and the true clause is never executed, which leaves us only nil

nil